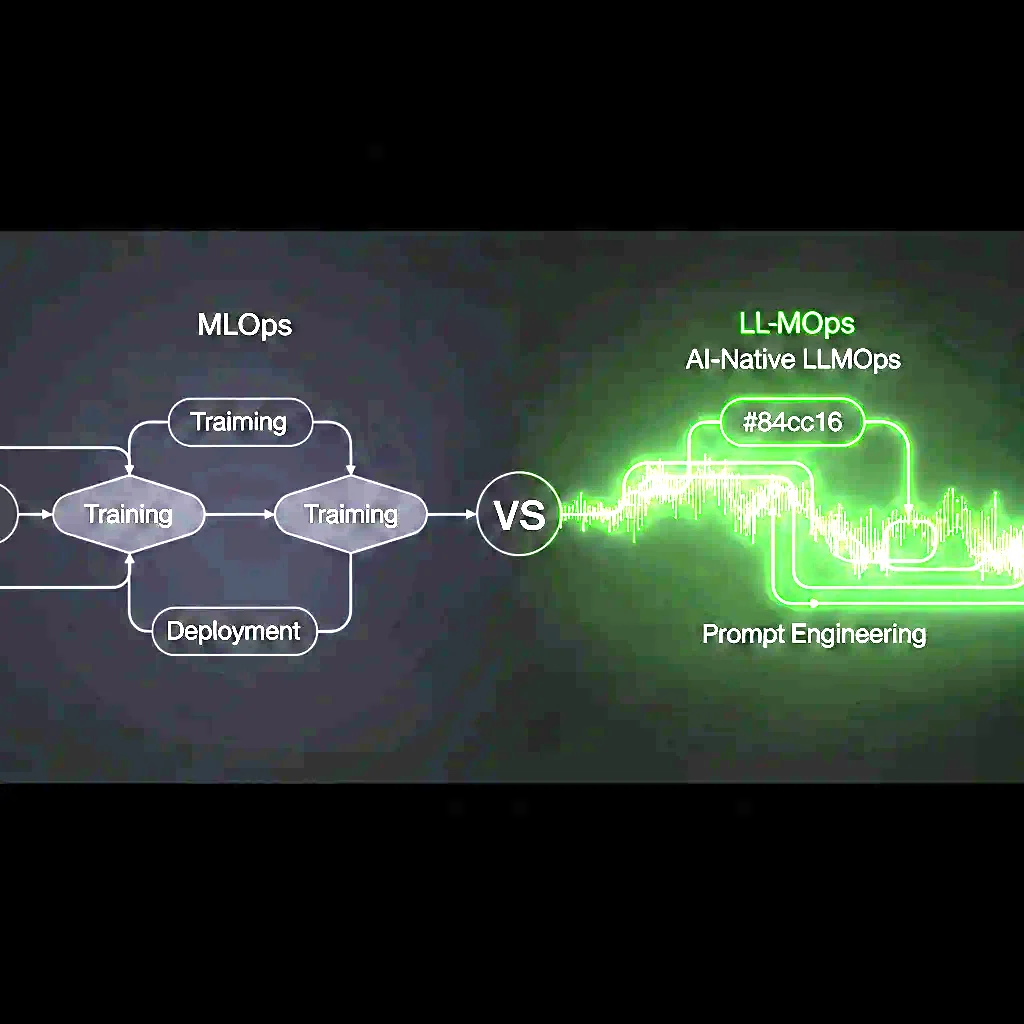

LLMOps vs MLOps: How AI-Native DevOps Changes Your Pipeline

The difference between MLOps and LLMOps, why traditional ML pipelines don't work for LLM applications, and what AI-native DevOps infrastructure looks like for UAE engineering teams.

Most UAE engineering teams building AI products are running into the same infrastructure problem. The MLOps playbook (the set of practices for training, versioning, deploying, and monitoring ML models) was designed for a different type of model than the LLMs that now dominate the AI product landscape. LLMOps is the emerging set of practices for operating large language model applications in production, and it differs from MLOps in ways that matter for your infrastructure.

What MLOps Was Built For

Traditional MLOps addresses the lifecycle of classical ML models: supervised learning models (classification, regression, ranking), tabular data pipelines, and models with training cycles measured in hours or days. The core MLOps concerns are:

- Experiment tracking - logging hyperparameters, metrics, and artifacts across training runs (MLflow, Weights & Biases)

- Data versioning - tracking the dataset used for each model version (DVC)

- Model registry - storing, versioning, and promoting model artifacts

- Model serving - deploying the model as an API (Triton, BentoML, Seldon)

- Model monitoring - detecting data drift and concept drift in production
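Of these concerns, drift monitoring is the easiest to show concretely. A common statistic for data drift on a numeric feature is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in production. The sketch below is a minimal pure-Python version; the bin edges and the 0.25 alert threshold (a frequently quoted rule of thumb) are assumptions, not values taken from any particular tool.

```python
import bisect
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bin_edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample (e.g. training data) and a production sample.

    bin_edges must be sorted ascending; values below the first edge or at/above
    the last edge fall into the two open-ended outer bins.
    """

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            # bisect_right returns how many edges are <= v, i.e. the bin index.
            counts[bisect.bisect_right(bin_edges, v)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p_ref, p_prod = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_ref, p_prod))


training = [0.1 * i for i in range(100)]  # feature values seen at training time
production = [x + 3 for x in training]    # the same feature after a shift
psi = population_stability_index(training, production, bin_edges=[2, 4, 6, 8])
if psi > 0.25:  # assumed alert threshold
    print(f"Data drift alert: PSI = {psi:.2f}")
```

Identical distributions give a PSI of zero; the shifted sample above lands well past the assumed threshold and triggers the alert.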

These concerns don’t go away with LLMs. But LLMs introduce an entirely new layer of operational complexity.

Where LLMs Break MLOps Assumptions

Assumption 1: You train your own model. Classical MLOps assumes you train the model yourself, either from scratch or by fine-tuning a base model. With LLMs, most UAE product teams use foundation model APIs (OpenAI, Anthropic, Cohere, Google Gemini) or open-source models (Llama, Mistral) hosted on their own infrastructure. You're not training; you're prompting, building RAG pipelines, and fine-tuning at the margin. The MLOps training pipeline is either absent or a small part of the stack.

Assumption 2: Model quality is measurable with standard metrics. Classical ML models have well-defined evaluation metrics (accuracy, AUC-ROC, F1). LLM outputs are free-form text, so evaluating whether a generated response is “good” requires LLM-as-judge evaluation, human feedback, or task-specific automated metrics. The evaluation infrastructure this requires is fundamentally different.
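As an example of a task-specific automated metric, token-overlap F1 (popularised by extractive question-answering benchmarks such as SQuAD) compares a generated answer with a reference answer word by word. The sketch below is a simplified version with its own assumed normalisation rules. It rewards lexical overlap only, which is why teams layer LLM-as-judge scoring or human review on top for open-ended outputs.

```python
import re
from collections import Counter


def normalise(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, split on whitespace (assumed rules)."""
    return re.sub(r"[^\w\s]", " ", text.lower()).split()


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a generated answer and a reference answer."""
    pred, ref = normalise(prediction), normalise(reference)
    if not pred or not ref:
        return float(pred == ref)  # both empty counts as a match
    common = Counter(pred) & Counter(ref)  # multiset intersection of tokens
    overlap = sum(common.values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


print(token_f1("The VAT rate is 5%", "5% is the UAE VAT rate"))
```

The example scores roughly 0.91: every predicted token appears in the reference, but the reference token "uae" is missing from the prediction.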

Assumption 3: Inference cost is fixed. Classical ML models have predictable inference costs (compute time per request, roughly proportional to model size). LLM inference costs depend on token count, covering both input (prompt plus context) and output (generated response). A RAG pipeline that retrieves 10 documents and appends them to the prompt before sending it to GPT-4 has a very different cost profile from the same pipeline retrieving 2 documents. Monitoring and optimising token costs is a first-class LLMOps concern with no real counterpart in classical MLOps.
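The cost of a request is simple arithmetic: input tokens times the input price plus output tokens times the output price, with providers typically pricing the two differently. The sketch below applies it to the 2-versus-10-document comparison; the per-token prices, the 500-token document chunks, the 300-token base prompt, and the 400-token answer are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1,000 tokens; the numbers used below are placeholders, not real list prices."""

    input_per_1k: float
    output_per_1k: float


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    return (input_tokens * pricing.input_per_1k + output_tokens * pricing.output_per_1k) / 1000


def rag_request_cost(
    num_docs: int, tokens_per_doc: int, base_prompt_tokens: int, output_tokens: int, pricing: Pricing
) -> float:
    # Every retrieved document is appended to the prompt, so it is billed as input tokens.
    input_tokens = base_prompt_tokens + num_docs * tokens_per_doc
    return request_cost(input_tokens, output_tokens, pricing)


pricing = Pricing(input_per_1k=0.01, output_per_1k=0.03)  # hypothetical prices
for k in (2, 10):
    cost = rag_request_cost(k, tokens_per_doc=500, base_prompt_tokens=300, output_tokens=400, pricing=pricing)
    print(f"{k:>2} documents: ${cost:.4f} per request")
```

With these assumed numbers, 2 documents cost $0.025 per request and 10 documents cost $0.065, or 2.6 times as much, and the entire difference comes from input tokens.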

Assumption 4: Model versions are self-contained. When you update a classical ML model, you replace one artifact with another. LLM application quality depends on the model, but also on prompt templates, retrieval configuration, chunking strategy, and context management, all of which interact. Versioning an LLM application means versioning all of these together, not just the model.
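One way to make this concrete is to treat the whole configuration as a single versioned object and derive the application version from its content. The sketch below assumes a hypothetical `LLMAppConfig` schema with illustrative field names; a real project would include whatever settings its own pipeline exposes.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class LLMAppConfig:
    """Hypothetical schema: every setting that changes output quality, versioned as one unit."""

    model: str
    temperature: float
    prompt_template: str
    retriever_top_k: int
    chunk_size: int
    chunk_overlap: int

    def version(self) -> str:
        # Canonical JSON (sorted keys) so identical configs always hash identically.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


base = LLMAppConfig(
    model="example-model-2024-01",  # placeholder model identifier
    temperature=0.2,
    prompt_template="Answer using only the context.\n{context}\nQ: {question}",
    retriever_top_k=4,
    chunk_size=512,
    chunk_overlap=64,
)
smaller_chunks = replace(base, chunk_size=256)  # a chunking change alone...
print(base.version(), smaller_chunks.version())  # ...yields a new application version
```

Because the hash covers every field, a chunking change produces a new version id even though the model is unchanged, which is exactly what a model-only registry would miss.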

The LLMOps Stack for UAE Engineering Teams

A production-grade LLMOps pipeline for a UAE company building on LLMs looks like this:

Prompt management: Version-controlled prompt templates with automated evaluation (LangSmith, PromptLayer, or a custom setup). Every prompt change goes through a PR, and every deployment tests the prompt against a golden dataset.
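A minimal sketch of both ideas together, with hypothetical names throughout: prompts are identified by a hash of their template text, and a CI step renders each golden-dataset case, calls the model, and reports the pass rate. `stub_model` stands in for a real LLM call and `exact_match` for whatever scorer the task needs (token F1, an LLM judge, and so on).

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str

    @property
    def version(self) -> str:
        # Content hash: any edit to the template produces a new version id.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:8]

    def render(self, **variables: str) -> str:
        return self.template.format(**variables)


def golden_pass_rate(
    prompt: PromptVersion,
    golden: list[dict],
    model: Callable[[str], str],
    score: Callable[[str, str], float],
    threshold: float = 1.0,
) -> float:
    """Fraction of golden cases whose model output scores at or above `threshold`."""
    passed = sum(
        score(model(prompt.render(**case["inputs"])), case["expected"]) >= threshold
        for case in golden
    )
    return passed / len(golden)


def exact_match(output: str, expected: str) -> float:
    return float(output.strip().lower() == expected.strip().lower())


def stub_model(prompt: str) -> str:
    # Stand-in for a real LLM call so the example runs offline.
    return "5%" if "VAT" in prompt else "Dubai"


qa_prompt = PromptVersion("qa", "Answer in as few words as possible.\nQuestion: {question}\nAnswer:")
golden = [
    {"inputs": {"question": "What is the standard VAT rate in the UAE?"}, "expected": "5%"},
    {"inputs": {"question": "What is the capital of the UAE?"}, "expected": "Abu Dhabi"},
]
rate = golden_pass_rate(qa_prompt, golden, stub_model, exact_match)
print(f"prompt {qa_prompt.name}@{qa_prompt.version}: {rate:.0%} of golden cases passed")
# In CI, a pass rate below an agreed minimum would fail the PR.
```

Here the stub gets one of the two golden cases wrong, so the run reports 50%. Wiring the pass rate to a minimum threshold in CI turns every prompt PR into a regression test.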

RAG pipeline observability: If you’re using retrieval-augmented generation, you need visibility into retrieval quality (are the right documents being retrieved?), context utilisation (how much of the retrieved context is the model actually using?), and latency breakdown (how long is retrieval vs. generation?).
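Two of these signals are cheap to capture from day one. The sketch below wraps a RAG request to record which documents were retrieved and how long retrieval and generation each took, then computes recall@k against a small hand-labelled set of relevant documents. The retriever, generator, and document ids are stubs (assumptions); measuring context utilisation usually needs heavier tooling than shown here.

```python
import time
from dataclasses import dataclass
from typing import Callable

Doc = tuple[str, str]  # (doc_id, text)


@dataclass
class RAGTrace:
    query: str
    retrieved_ids: list[str]
    retrieval_ms: float
    generation_ms: float
    answer: str


def traced_rag(
    query: str,
    retrieve: Callable[[str], list[Doc]],
    generate: Callable[[str, list[str]], str],
) -> RAGTrace:
    """Run one RAG request and record what was retrieved and where the time went."""
    t0 = time.perf_counter()
    docs = retrieve(query)
    t1 = time.perf_counter()
    answer = generate(query, [text for _, text in docs])
    t2 = time.perf_counter()
    return RAGTrace(query, [doc_id for doc_id, _ in docs], (t1 - t0) * 1000, (t2 - t1) * 1000, answer)


def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Share of the labelled relevant documents that appear in the top-k results."""
    if not relevant_ids:
        raise ValueError("recall@k needs at least one labelled relevant document")
    return len(set(retrieved_ids[:k]) & relevant_ids) / len(relevant_ids)


# Stub retriever and generator standing in for a vector store and an LLM.
def stub_retrieve(query: str) -> list[Doc]:
    return [("doc-7", "Refunds are issued within 14 days."), ("doc-2", "Delivery is free over AED 200.")]


def stub_generate(query: str, contexts: list[str]) -> str:
    return contexts[0]


trace = traced_rag("How long do refunds take?", stub_retrieve, stub_generate)
print(f"retrieval {trace.retrieval_ms:.2f} ms | generation {trace.generation_ms:.2f} ms")
print("recall@2:", recall_at_k(trace.retrieved_ids, {"doc-7", "doc-9"}, k=2))
```

In production, the `RAGTrace` records would be exported to a tracing or metrics backend rather than printed.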

Token cost monitoring: Per-request token counts, cost attribution by user, feature, and customer, and budget alerts. For UAE SaaS products that charge per seat, LLM cost per customer is a critical unit economics metric: seat revenue is flat, but a heavy user can consume many times more tokens than a light one.
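A minimal sketch of cost attribution and budget alerting, assuming each request is logged as a hypothetical `UsageRecord` carrying customer, feature, and token counts. The prices, customers, budgets, and 80% warning level are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class UsageRecord:
    customer_id: str
    feature: str
    input_tokens: int
    output_tokens: int


def attribute_costs(
    records: list[UsageRecord],
    input_per_1k: float,
    output_per_1k: float,
    key: Callable[[UsageRecord], str] = lambda r: r.customer_id,
) -> dict[str, float]:
    """Sum USD cost per group (customer by default; pass another key for feature or user)."""
    totals: dict[str, float] = defaultdict(float)
    for r in records:
        totals[key(r)] += (r.input_tokens * input_per_1k + r.output_tokens * output_per_1k) / 1000
    return dict(totals)


def budget_alerts(costs: dict[str, float], budgets: dict[str, float], warn_at: float = 0.8) -> list[str]:
    """Groups whose spend has reached `warn_at` (e.g. 80%) of their budget."""
    return sorted(g for g, spent in costs.items() if g in budgets and spent >= warn_at * budgets[g])


records = [  # hypothetical usage log
    UsageRecord("acme", "chat", input_tokens=4000, output_tokens=1000),
    UsageRecord("acme", "search", input_tokens=2000, output_tokens=500),
    UsageRecord("globex", "chat", input_tokens=1000, output_tokens=200),
]
per_customer = attribute_costs(records, input_per_1k=0.01, output_per_1k=0.03)  # placeholder prices
per_feature = attribute_costs(records, 0.01, 0.03, key=lambda r: r.feature)
print(per_customer, per_feature)
print("at or above 80% of budget:", budget_alerts(per_customer, {"acme": 0.12, "globex": 0.10}))
```

Passing a different `key` gives per-feature or per-user breakdowns from the same usage log.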

Model serving: For teams self-hosting open-source LLMs (Llama 3, Mistral, Phi-3), vLLM is a widely adopted serving framework: it implements PagedAttention for GPU memory efficiency and continuous batching for throughput. For teams using API-based models, a model gateway such as LiteLLM provides fallback routing, load balancing, and cost tracking across providers.
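LiteLLM provides fallback routing as configuration. The sketch below is not its API; it is a hand-rolled illustration of the underlying idea, using hypothetical provider functions: try providers in priority order and move to the next one when a call fails.

```python
from typing import Callable, Sequence

Provider = tuple[str, Callable[[str], str]]  # (provider name, completion function)


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has failed."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order and return (provider_name, completion)."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would fall back only on retryable errors
            errors.append(f"{name}: {exc!r}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical providers: the primary times out, the secondary answers.
def primary(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"


name, text = complete_with_fallback("Hello", [("primary", primary), ("secondary", secondary)])
print(name, text)  # prints: secondary echo: Hello
```

Collecting every provider's error before giving up means the final exception explains the whole chain, which is what on-call engineers need when all upstreams are degraded at once.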

Safety and compliance: UAE companies handling regulated data (financial, health, personal) need PII detection and redaction in their LLM pipelines, applied both to inputs (before they are sent to external APIs) and to outputs (before they are returned to users). This is non-negotiable for CBUAE-, DHA-, or DFSA-regulated products.
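A minimal sketch of regex-based redaction, applied to a prompt before it leaves your network (the same function can run on model outputs). The patterns cover a few UAE formats (email addresses, Emirates ID numbers, +971 mobile numbers, unspaced UAE IBANs) and are illustrative assumptions. Regulated workloads would typically combine them with a dedicated PII detection service that also catches names and free-text identifiers, and would test both against real data.

```python
import re

# Illustrative patterns for a few common UAE identifiers; not exhaustive or production-grade.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # Emirates ID: 15 digits starting with 784, usually written 784-YYYY-NNNNNNN-N.
    "EMIRATES_ID": re.compile(r"\b784-?\d{4}-?\d{7}-?\d\b"),
    # UAE mobile numbers: +971 / 00971 / 0, then 5X and seven more digits.
    "UAE_MOBILE": re.compile(r"(?:\+971[\s-]?|00971[\s-]?|\b0)5\d[\s-]?\d{3}[\s-]?\d{4}\b"),
    # UAE IBAN: "AE" followed by 21 digits (23 characters, matched here without spaces).
    "IBAN": re.compile(r"\bAE\d{21}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each detected PII span with a [LABEL] placeholder; return text and counts."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


safe_prompt, found = redact(
    "Customer fatima@example.ae, mobile +971 50 123 4567, Emirates ID 784-1990-1234567-1."
)
print(safe_prompt)  # Customer [EMAIL], mobile [UAE_MOBILE], Emirates ID [EMIRATES_ID].
print(found)
```

Returning per-label counts alongside the redacted text lets the pipeline log what was removed (for audit purposes) without logging the PII itself.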

What This Means for Your DevOps Team

If your DevOps team’s LLM experience is “we set up the API key and it works,” you’re missing the operational infrastructure that keeps LLM products reliable and cost-effective at scale.

devopsuae.com specialises in AI-native DevOps: building the infrastructure layer that makes LLM applications production-ready. From GPU-aware Kubernetes for self-hosted models to cost-monitoring dashboards for API-based applications, we build what your AI team needs to ship and operate with confidence.

Contact us to discuss your AI infrastructure needs.
